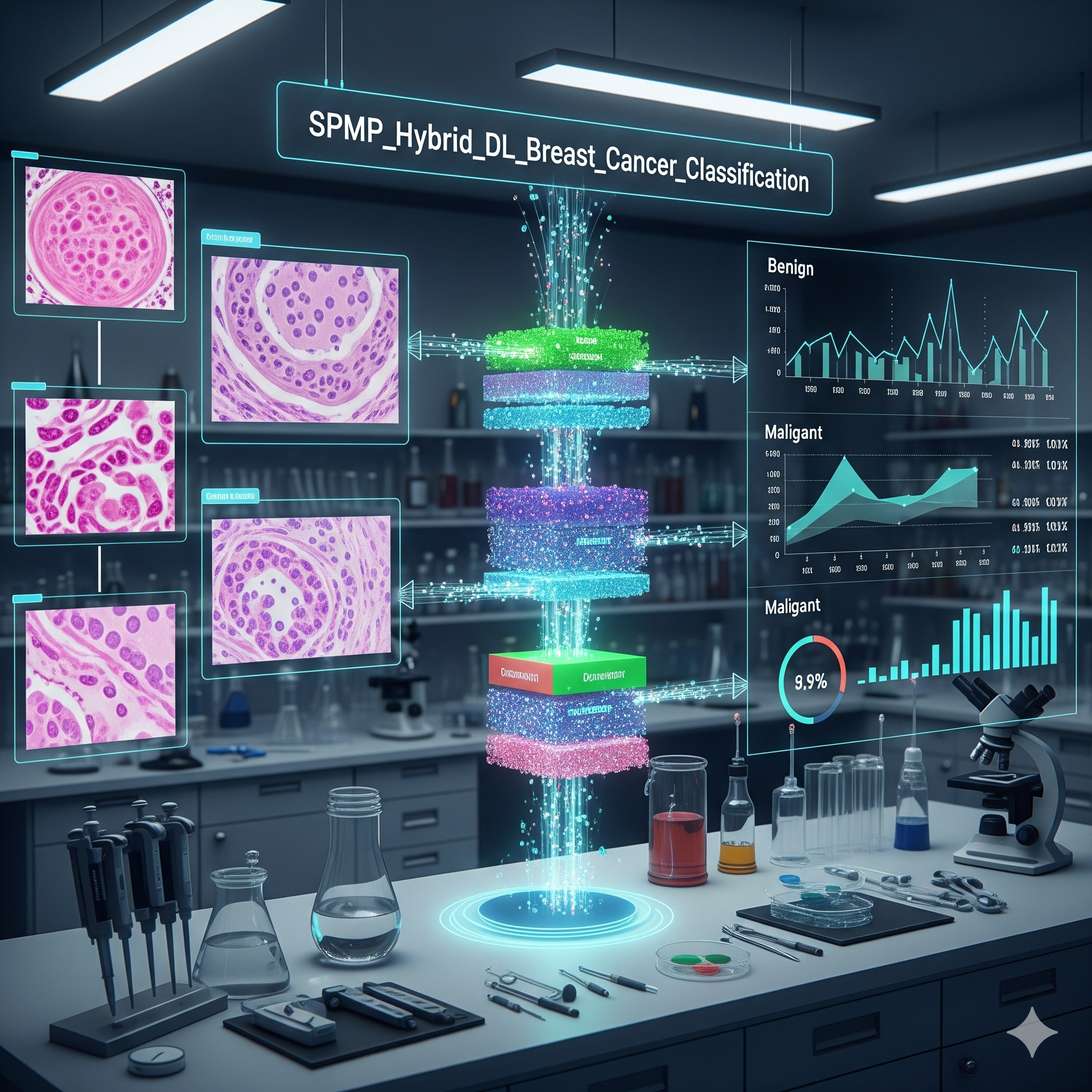

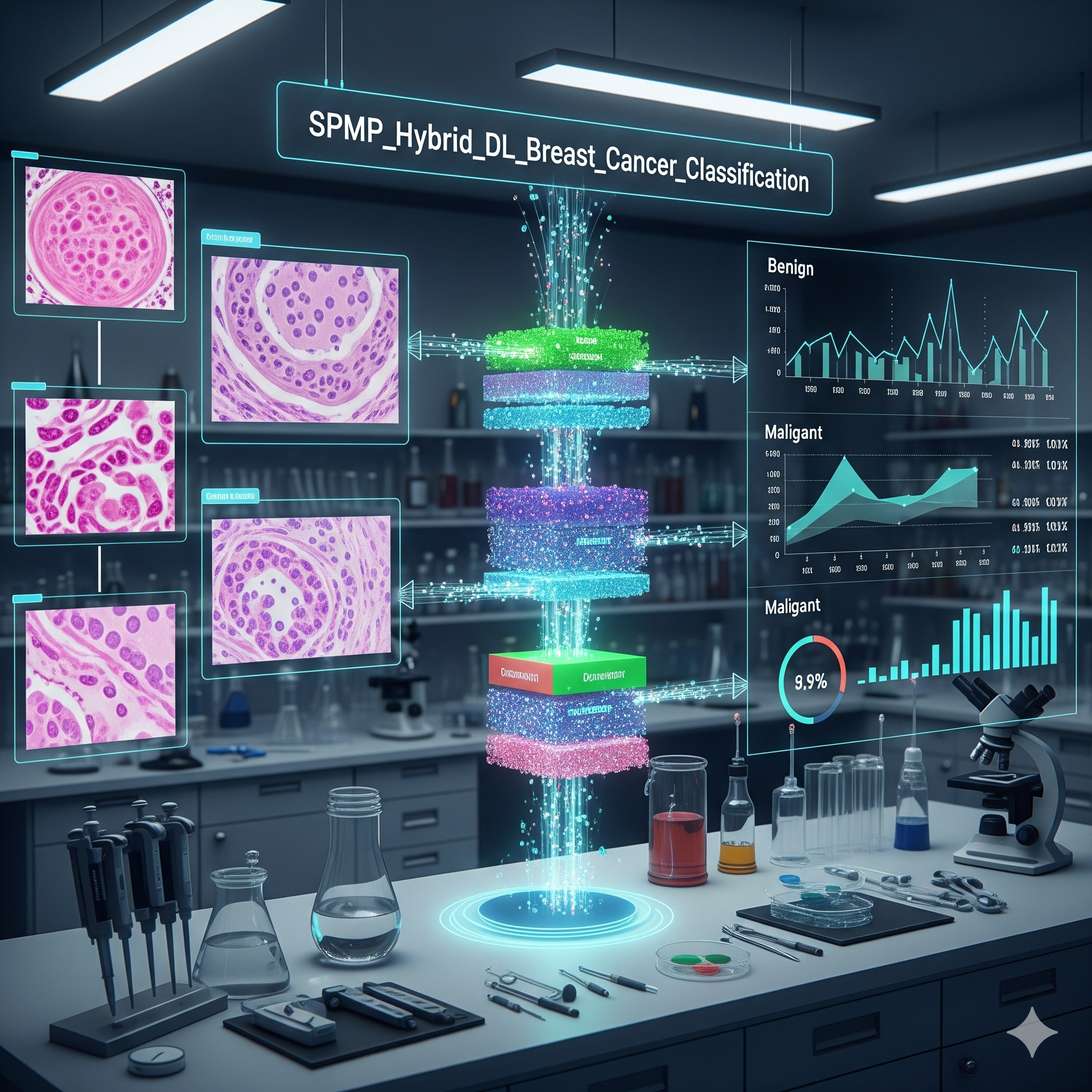

A hybrid deep learning model for breast cancer detection — fusing mammographic and sonographic image features for multi-modal classification.

AI / ML

A Final Year Project building a multi-modal neural network that fuses information from two imaging modalities — mammograms and ultrasound — to improve diagnostic accuracy.

The project is a hybrid convolutional neural network (CNN) that classifies breast cancer by fusing features extracted from two imaging modalities: mammograms and ultrasound scans. The goal is to exploit complementary information across the modalities and achieve higher diagnostic accuracy than single-modality approaches.

A model trained on a single modality cannot use information that is visible only in the other imaging type. Combining features from two fundamentally different image types, with different resolutions, orientations, and noise profiles, requires careful architecture design and a deliberate feature fusion strategy.

The architecture uses two separate CNN branches, one for mammographic images and one for sonographic images, followed by a fusion layer that concatenates the extracted features before the final classification head. Each branch is initialized from pre-trained weights via transfer learning, which gives it a strong starting point.
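The data flow described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the project's implementation: each branch is reduced to a single convolution layer with random kernels (the real branches would be deep, pre-trained networks), the head is assumed to be a binary logistic classifier, and every name here (`conv2d_valid`, `branch_features`, `fuse_and_classify`, the image sizes, the kernel counts) is hypothetical.

```python
import math
import random


def conv2d_valid(image, kernel):
    """Valid (no padding) 2D cross-correlation of one channel with one kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def branch_features(image, kernels):
    """Conv + ReLU + global average pooling: one feature per kernel."""
    features = []
    for kernel in kernels:
        fmap = conv2d_valid(image, kernel)
        activated = [max(0.0, v) for row in fmap for v in row]
        features.append(sum(activated) / len(activated))
    return features


def random_kernels(rng, count, size=3):
    """Stand-in for learned or pre-trained filters (assumption)."""
    return [[[rng.uniform(-1, 1) for _ in range(size)] for _ in range(size)]
            for _ in range(count)]


def fuse_and_classify(mammo_feats, sono_feats, weights, bias):
    """Concatenate branch features, then apply a logistic head."""
    fused = mammo_feats + sono_feats  # fusion by concatenation
    logit = sum(w * x for w, x in zip(weights, fused)) + bias
    return fused, 1.0 / (1.0 + math.exp(-logit))


rng = random.Random(0)

# The two inputs deliberately differ in size: global average pooling
# gives each branch a fixed-length feature vector regardless of resolution.
mammo_image = [[rng.random() for _ in range(12)] for _ in range(16)]
sono_image = [[rng.random() for _ in range(8)] for _ in range(8)]

mammo_kernels = random_kernels(rng, count=4)  # mammography branch
sono_kernels = random_kernels(rng, count=3)   # ultrasound branch
head_weights = [rng.uniform(-1, 1) for _ in range(4 + 3)]

mammo_feats = branch_features(mammo_image, mammo_kernels)
sono_feats = branch_features(sono_image, sono_kernels)
fused, probability = fuse_and_classify(
    mammo_feats, sono_feats, head_weights, bias=0.0
)

print(len(fused), round(probability, 3))
```

The point of the sketch is the shape of the computation: the branches never share weights, and the classification head only ever sees the concatenated vector, so each modality contributes its own features regardless of input resolution.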

A multi-modal deep learning approach to breast cancer detection — bridging two imaging domains for improved classification.

Modeling

Data

Evaluation

A research-grade deep learning pipeline addressing a real clinical problem.

Separate convolutional branches process mammographic and sonographic inputs independently before fusion.

Pre-trained weights give each branch a strong initialization, reducing the amount of labeled training data needed.

Evaluated using accuracy, F1, ROC-AUC, and a confusion matrix. F1, ROC-AUC, and the confusion matrix matter most on imbalanced medical datasets, where accuracy alone can be misleading.
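To make these metrics concrete, here is a small standard-library sketch that computes all four for a binary classifier. The labels, scores, and 0.5 threshold are invented for illustration and are not results from this project; the function names are likewise hypothetical. ROC-AUC is computed as the fraction of (positive, negative) pairs in which the positive case receives the higher score, with ties counting as half.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn


def accuracy(tp, fp, fn, tn):
    return (tp + tn) / (tp + fp + fn + tn)


def f1_score(tp, fp, fn):
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def roc_auc(y_true, scores):
    """Probability that a random positive outscores a random negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical imbalanced sample: 8 negative cases, 2 positive cases.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
scores = [0.10, 0.20, 0.15, 0.30, 0.05, 0.60, 0.25, 0.40, 0.70, 0.45]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print("confusion (tp, fp, fn, tn):", (tp, fp, fn, tn))  # (1, 1, 1, 7)
print("accuracy:", accuracy(tp, fp, fn, tn))            # 0.8
print("F1:", f1_score(tp, fp, fn))                      # 0.5
print("ROC-AUC:", roc_auc(y_true, scores))              # 0.9375
```

In this toy sample accuracy is 0.8 even though one of the two positive cases is missed, while F1 drops to 0.5. That gap is why accuracy is reported alongside the other metrics when classes are imbalanced.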